Hosting provider immers·cloud has rolled out a new generation of GPU servers powered by NVIDIA H200, one of the most advanced AI accelerators on the market.

This is more than a hardware refresh. The new machines handle heavy workloads, from 100B+ parameter LLMs to multimodal architectures, and run LLM inference up to roughly twice as fast as their H100 predecessors.

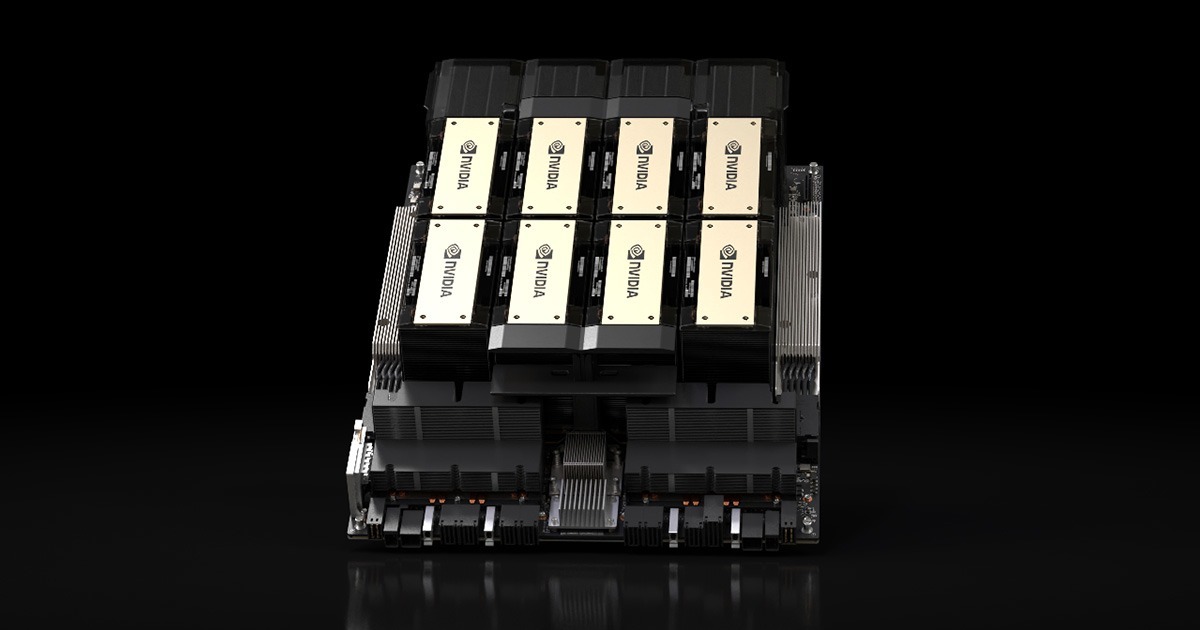

NVIDIA H200. Image: nvidia.com

Under the hood: where silicon meets ambition

Inside, there’s enough power to make your datasets blush.

- CPU: 2 × Intel Xeon Gold 6548Y+ (5th Gen), up to 4.1 GHz with Turbo Boost, with AVX-512 and DL Boost for faster AI math.
- Memory: up to 8,192 GB of DDR5 ECC Registered RAM at 5600 MT/s, about 1.75× the transfer rate of DDR4-3200.
- Storage: 3.2 TB NVMe SSD (Samsung datacenter-grade), so models load faster than you can say “inference time.”

When 141 GB of VRAM still feels like bragging rights

The GPU specs are where the fun begins:

- 141 GB of HBM3e memory, enough to fit monsters like GPT-OSS-120B;
- 4.8 TB/s of memory bandwidth, about 1.4× that of the H100 SXM (3.35 TB/s);
- 7 NVDEC and 7 JPEG decoders, well suited to multimodal workflows;
- 4th-gen Tensor Cores and Transformer Engine with FP8 support, for up to 2× faster training and inference.

The H200 architecture is fine-tuned for today’s LLMs: efficient with long contexts, quantization-ready, and optimized for distributed inference.
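To put the memory and bandwidth figures in perspective, here is a back-of-the-envelope sketch in Python. It estimates how much VRAM a model's weights need at different precisions, and gives a rough ceiling on single-stream decoding speed for a dense model, assuming every generated token requires reading all weights from memory once. The function names, the bytes-per-parameter table, and the 70B dense model are illustrative assumptions rather than anything published by the provider. The estimate ignores KV cache, activations, and runtime overhead, so real numbers will be lower.

```python
# Back-of-the-envelope sizing for one H200. Weight-only estimate: it ignores
# KV cache, activations, and framework overhead.

H200_VRAM_GB = 141          # from the spec list above
H200_BANDWIDTH_TBPS = 4.8   # from the spec list above

# Assumed bytes per parameter for common weight formats (illustrative).
BYTES_PER_PARAM = {"fp16/bf16": 2.0, "fp8": 1.0, "4-bit": 0.5}


def weight_footprint_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    # (params_billions * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billions * bytes_per_param


def fits_on_one_gpu(params_billions: float, bytes_per_param: float,
                    vram_gb: float = H200_VRAM_GB) -> bool:
    """True if the weights alone fit in VRAM (necessary, not sufficient)."""
    return weight_footprint_gb(params_billions, bytes_per_param) <= vram_gb


def max_decode_tokens_per_s(weights_gb: float,
                            bandwidth_tbps: float = H200_BANDWIDTH_TBPS) -> float:
    """Bandwidth-bound ceiling for batch-size-1 decoding of a dense model.

    Assumes each generated token streams all weights from HBM exactly once.
    """
    return bandwidth_tbps * 1000.0 / weights_gb


if __name__ == "__main__":
    for fmt, bpp in BYTES_PER_PARAM.items():
        size = weight_footprint_gb(120, bpp)
        print(f"120B params @ {fmt}: {size:.0f} GB, fits: {fits_on_one_gpu(120, bpp)}")

    # Hypothetical dense 70B model stored in FP8.
    weights = weight_footprint_gb(70, BYTES_PER_PARAM["fp8"])
    print(f"70B @ fp8: at most ~{max_decode_tokens_per_s(weights):.0f} tokens/s per stream")
```

The takeaway is that quantization matters twice: lower-precision weights take less of the 141 GB, and they also mean fewer bytes to stream per token, which raises the bandwidth-bound ceiling. Mixture-of-experts models read only a subset of their weights per token, so this simple bound does not apply to them directly.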

The price tag

Base configuration: 16 vCPU, 128 GB RAM, 160 GB SSD.

- Hourly rate: ~$6.65
- Monthly rate: ~$4,305

Available by the hour — for when your experiments deserve a little extra horsepower, but not a full-time commitment.
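For anyone weighing the two billing options, a quick sketch of the break-even arithmetic may help. It uses the approximate rates quoted above. The 730-hour month and the function names are assumptions made for illustration, and actual billing rules (rounding, minimum charges, taxes) may differ.

```python
# Break-even between hourly and monthly billing, using the approximate
# rates quoted in the article. Real invoices may round differently.

HOURLY_USD = 6.65       # approximate hourly rate
MONTHLY_USD = 4305.0    # approximate monthly rate
HOURS_PER_MONTH = 730   # assumption: 365 * 24 / 12


def break_even_hours(hourly: float = HOURLY_USD,
                     monthly: float = MONTHLY_USD) -> float:
    """Hours of use per month at which both plans cost the same."""
    return monthly / hourly


def cheaper_plan(hours_used: float, hourly: float = HOURLY_USD,
                 monthly: float = MONTHLY_USD) -> str:
    """Return which plan costs less for a given number of hours in a month."""
    return "hourly" if hours_used * hourly < monthly else "monthly"


if __name__ == "__main__":
    hours = break_even_hours()
    share = hours / HOURS_PER_MONTH
    print(f"Break-even: {hours:.0f} hours (~{share:.0%} of a month)")
    for used in (40, 200, 700):
        print(f"{used} h/month -> {cheaper_plan(used)}")
```

At these rates the break-even point sits at about 647 hours a month, so hourly billing stays cheaper unless the server is busy nearly around the clock.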